AI infrastructure services provide the essential hardware, software, networking, and storage required to build, train, and deploy artificial intelligence models at scale. While many businesses default to public hyperscalers or temporary rentals, this guide explores why managed private cloud solutions often deliver superior cost predictability, data sovereignty, and performance.

TL;DR

- The Hidden Costs: Public cloud AI infrastructure often comes with unpredictable data egress fees and multi-tenant performance bottlenecks.

- The Private Cloud Advantage: Managed private cloud offers flat-rate pricing, dedicated hardware, and strict data sovereignty for compliance-sensitive industries.

- The Equinix Differentiator: Partnering with Accrets on Equinix infrastructure provides the security of a private data center with the high-speed, carrier-neutral connectivity of a public cloud.

- The Action Plan: Enterprises must audit their workload types, calculate data gravity, and define compliance needs before choosing a deployment model.

Most enterprises do not calculate the true cost of scaling their AI ambitions until the cloud bill arrives. As companies move from experimental artificial intelligence projects to full-scale enterprise deployment, the foundational infrastructure they choose becomes the ultimate bottleneck or the ultimate catalyst.

What are AI infrastructure services?

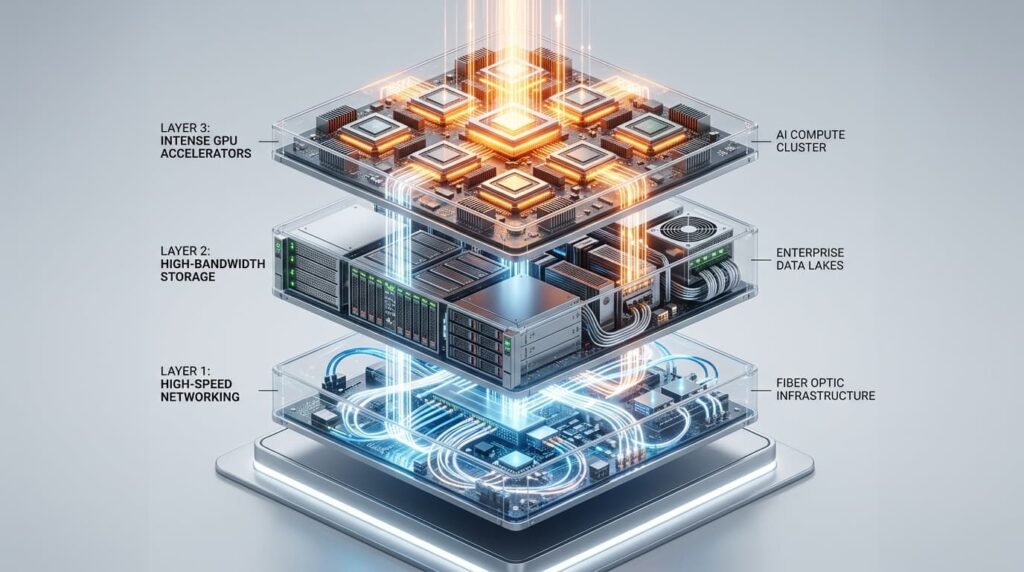

AI infrastructure services encompass the specialized hardware and software components, including high-performance GPUs, low-latency networking, high-bandwidth storage, and dedicated MLOps platforms, required to train, deploy, and scale artificial intelligence workloads efficiently, securely, and cost-effectively within an enterprise environment.

While the initial instinct for many IT leaders is to spin up instances on massive public hyperscalers or utilize temporary public GPU rental platforms, the reality of production-grade AI is far more complex. Renting raw compute power is fantastic for short-term experimentation. However, when you factor in unpredictable data egress fees, multi-tenant latency, and stringent compliance requirements, the math changes rapidly. That is exactly why forward-thinking enterprises are pivoting their strategies to secure AI infrastructure services through managed private cloud environments, combining the agility of the cloud with the uncompromised security of dedicated hardware.

The Infrastructure Layer Most AI Strategies Ignore (And Why It Dictates Success)

When business leaders discuss AI, the conversation almost exclusively revolves around Large Language Models (LLMs), parameter counts, and software applications. What gets completely ignored is the physical and virtualized foundation that makes these applications function without crashing.

Enterprise AI infrastructure requires far more than just renting a GPU by the hour. It demands a highly synchronized orchestra of technical components.

First, there is the compute balance. Not every AI workload requires an array of top-tier H100 GPUs. Training a foundation model is vastly different from running daily inference, which can often be handled efficiently by a balanced mix of CPUs and lower-tier GPUs. Second, there is the challenge of high-bandwidth storage. AI models devour data. If your storage architecture cannot feed data to your compute nodes fast enough, your expensive GPUs will sit idle, burning budget while they wait for input.

Third, low-latency networking is non-negotiable. The transfer speeds between compute and storage nodes dictate the efficiency of the entire system. Finally, an integrated MLOps layer is required to manage the deployment, monitoring, and lifecycle of the models themselves. When organizations try to piece this together from disparate infrastructure-as-a-service (IaaS) components offered by a public vendor, they often end up with a fragmented, inefficient architecture. Managed private AI infrastructure ensures that compute, storage, and networking are architected together from the ground up for the demands of heavy AI workloads.
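The storage point above can be made concrete with a little arithmetic. The following is a minimal sketch, not a benchmark: the `TrainingJob` fields, the function names, and every number in the example are hypothetical, and it assumes storage read throughput is the only bottleneck.

```python
from dataclasses import dataclass


@dataclass
class TrainingJob:
    """Hypothetical description of a training job's input demand."""
    gpus: int                        # number of GPUs in the job
    samples_per_sec_per_gpu: float   # samples one GPU can consume per second
    bytes_per_sample: float          # average on-disk size of one sample


def required_read_gbps(job: TrainingJob) -> float:
    """Sustained read throughput (GB/s) needed to keep every GPU fed."""
    return job.gpus * job.samples_per_sec_per_gpu * job.bytes_per_sample / 1e9


def gpu_busy_fraction(job: TrainingJob, storage_read_gbps: float) -> float:
    """Upper bound on GPU utilization if storage is the only bottleneck."""
    needed = required_read_gbps(job)
    if needed <= 0:
        return 1.0
    return min(1.0, storage_read_gbps / needed)


if __name__ == "__main__":
    # Illustrative placeholder values, not measurements.
    job = TrainingJob(gpus=8, samples_per_sec_per_gpu=2500, bytes_per_sample=150_000)
    print(f"needed: {required_read_gbps(job):.1f} GB/s")
    print(f"GPU busy fraction at 1.5 GB/s: {gpu_busy_fraction(job, 1.5):.0%}")
```

With these placeholder inputs the job needs 3.0 GB/s of sustained reads, so a storage tier that delivers 1.5 GB/s would leave the GPUs busy at most half the time. Real pipelines add caching, prefetching, and network effects that this sketch ignores.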

The TCO Matrix: Public Cloud GPU vs. Managed Private AI Infrastructure

To make an informed decision, enterprise IT leaders must look beyond the hourly compute rate and calculate the Total Cost of Ownership (TCO) across the lifecycle of an AI deployment. Let us compare the three dominant models: Public Hyperscalers (AWS, GCP, Azure), Public GPU Rental Platforms, and Managed Private Cloud.

1. Public Hyperscalers (AWS, GCP, Azure)

- The Appeal: Massive global presence, infinite scalability on paper, and an ecosystem of proprietary AI tools.

- The Hidden Costs: Hyperscalers lock you into their ecosystem. The real financial bleed comes from data egress fees, which are charged whenever you move large datasets, checkpoints, or results out of their walled gardens. Additionally, reserved instances require long-term financial commitments that may not align with your evolving AI needs.

2. Public GPU Rental Platforms

- The Appeal: Cost-effective, short-term access to high-end hardware without the massive overhead of a hyperscaler. Excellent for quick prototyping or academic research.

- The Hidden Costs: These platforms often lack enterprise-grade Service Level Agreements (SLAs). They are essentially raw infrastructure. You are entirely responsible for the security, networking architecture, and MLOps integration. Furthermore, availability can be volatile; when market demand spikes, securing the compute you need when you need it becomes a gamble.

3. Managed Private AI Infrastructure

- The Appeal: Predictable, flat-rate pricing without surprise data egress fees. Dedicated hardware ensures you are not competing for bandwidth in a multi-tenant environment.

- The Real Value: For consistent AI workloads (like continual fine-tuning or heavy inference), the TCO over a 12-to-36-month period can be substantially lower than public options. Because you are not paying the hyperscaler premium or absorbing unpredictable usage spikes, your monthly spend stays predictable. This predictable financial model is exactly why so many CFOs and CIOs are exploring private cloud hosting services specifically tailored for generative AI and machine learning initiatives.
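
To see how the comparison above plays out, it can help to put it into a small model. The sketch below is only an illustration under stated assumptions: all rates, fees, and volumes are made-up placeholders rather than quotes from any provider, the function names are hypothetical, and staffing, discounts, and hardware refresh are deliberately left out.

```python
def pay_as_you_go_tco(months, gpu_hours_per_month, rate_per_gpu_hour,
                      egress_tb_per_month, egress_rate_per_tb):
    """Total cost of usage-based compute plus egress over `months`."""
    compute = gpu_hours_per_month * rate_per_gpu_hour
    egress = egress_tb_per_month * egress_rate_per_tb
    return months * (compute + egress)


def flat_rate_tco(months, monthly_fee, setup_fee=0.0):
    """Total cost of a flat monthly fee plus an optional one-time setup fee."""
    return setup_fee + months * monthly_fee


def break_even_month(horizon_months, payg_kwargs, flat_kwargs):
    """First month in which cumulative flat-rate cost is no higher than
    cumulative pay-as-you-go cost, or None if that never happens."""
    for month in range(1, horizon_months + 1):
        if flat_rate_tco(month, **flat_kwargs) <= pay_as_you_go_tco(month, **payg_kwargs):
            return month
    return None


if __name__ == "__main__":
    # Placeholder inputs: 8 GPUs running around the clock (8 * 730 hours).
    payg = dict(gpu_hours_per_month=5840, rate_per_gpu_hour=3.0,
                egress_tb_per_month=20, egress_rate_per_tb=90.0)
    flat = dict(monthly_fee=15000.0, setup_fee=30000.0)
    print(f"36-month pay-as-you-go: {pay_as_you_go_tco(36, **payg):,.0f}")
    print(f"36-month flat rate:     {flat_rate_tco(36, **flat):,.0f}")
    print(f"break-even month:       {break_even_month(36, payg, flat)}")
```

With these placeholder inputs, the flat-rate option becomes cheaper from month 7 onward. The result is entirely driven by the assumed numbers, so substitute your own utilization, rates, and egress volumes before drawing conclusions.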

The Governance Hurdle: Why Compliance-Sensitive Enterprises Cannot Rely Solely on Public Cloud

Financial services, healthcare organizations, and government agencies operate under a fundamentally different set of rules than a typical tech startup. For these compliance-sensitive sectors, the primary barrier to AI adoption is not just cost or talent; it is data sovereignty, security, and strict regulatory governance.

When you train an AI model on proprietary corporate data, whether that is patient health records, financial transaction histories, or classified intellectual property, information from that data is encoded in the model’s weights and can sometimes be recovered from them. Running these highly sensitive workloads in a multi-tenant public cloud environment introduces risks that many regulated organizations cannot accept. What happens if a misconfiguration exposes your training data? How do you guarantee data residency when workloads and replicas can be placed across multiple availability zones or regions?

Public hyperscalers offer compliance certifications, but under the shared responsibility model, the liability for any error your team makes in configuring or securing its workloads falls on your organization. Managed private AI infrastructure addresses this governance hurdle directly. By deploying on dedicated, single-tenant hardware, enterprises retain control over where their data lives, how it is processed, and who has access to it. A single-tenant deployment can also isolate the AI workload from the public internet and from other corporate environments. For organizations struggling to map their strict regulatory frameworks to modern generative AI tools, partnering with experts in cloud security consulting services alongside a managed private deployment ensures that innovation does not come at the cost of a massive compliance breach.

The Equinix Advantage: What Carrier-Neutral Managed Private Cloud Delivers for AI

Understanding the need for a managed private cloud is the first step. The second is realizing that where that private cloud lives matters just as much as how it is built. This is where Accrets’ strategic partnership with Equinix serves as a critical differentiator for global enterprises.

Equinix is the world’s digital infrastructure company, providing globally interconnected data centers. By building managed AI infrastructure within Equinix, enterprises gain a “best of both worlds” scenario: the ironclad security of a private deployment combined with the high-speed connectivity usually reserved for public hyperscalers.

Here is why a carrier-neutral infrastructure is vital for AI:

First, it addresses the latency problem. AI workloads often need to ingest data from various global sources in real time. Equinix provides direct, private interconnections to thousands of network providers and cloud services, bypassing the congested public internet.

Second, it reduces vendor lock-in. Because the infrastructure is carrier-neutral, you can connect your private AI core to AWS for a specific tool, Azure for another, and your corporate network over private interconnects, which keeps data transfer costs lower than routing the same traffic over the public internet.

This architecture allows organizations to run a private cloud environment with on-premises-level control that feels and scales like a massive global network, backed by Tier III and Tier IV data center reliability. It is an enterprise-grade solution designed to handle the most aggressive, data-heavy AI strategies without buckling under the pressure.

Framework: How to Scope Your AI Infrastructure Requirements

Before committing to any deployment model or signing a vendor contract, IT leaders must rigorously scope their AI infrastructure requirements. Skipping this step leads to vastly over-provisioned systems (wasting money) or under-provisioned bottlenecks (stalling innovation). Use this step-by-step framework to evaluate your needs:

- Audit Your Workload Type: Are you training a foundation model from scratch (requires massive, sustained GPU clusters), fine-tuning an open-source model like Llama 3 (requires moderate, burst compute), or running daily inference (requires efficient CPU/GPU balance and low latency)?

- Calculate Data Gravity: Where does the data you need to feed your AI currently live? If your data is entirely on-premise, moving it to a public cloud for AI processing will incur massive costs. Infrastructure must be built close to where the data naturally resides.

- Define Your Compliance Posture: Document every regulatory standard your AI must meet (GDPR, HIPAA, MAS guidelines in Singapore, etc.). If multi-tenancy violates these policies, public cloud is immediately off the table for core workloads.

- Forecast Predictable vs. Unpredictable Compute: Separate the baseline compute you need 24/7 from the unpredictable spikes.

- Engage in Capacity Planning: Proper IT infrastructure capacity planning ensures that your network bandwidth and storage I/O can actually support the GPUs you intend to utilize.

By systematically evaluating these five pillars, enterprises can confidently design an infrastructure strategy that balances performance, cost, and security.
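Two of the steps above, data gravity and the split between baseline and burst compute, lend themselves to quick back-of-the-envelope calculations. The following is a minimal sketch under stated assumptions: the function names, the choice of a quantile as the baseline, the link efficiency factor, and all sample values are hypothetical and only meant to show the shape of the calculation.

```python
def transfer_days(dataset_tb, link_gbps, efficiency=0.7):
    """Rough number of days needed to move a dataset over a network link.

    dataset_tb: dataset size in terabytes (10**12 bytes)
    link_gbps:  nominal link speed in gigabits per second
    efficiency: assumed fraction of the nominal speed actually achieved
    """
    bits = dataset_tb * 8e12
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 86400


def split_baseline_and_burst(hourly_gpu_demand, baseline_quantile=0.5):
    """Split a demand history into an always-on baseline and burst GPU-hours.

    The baseline is the demand level at the chosen quantile of the history;
    everything above it in any given hour counts as burst.
    """
    if not hourly_gpu_demand:
        raise ValueError("demand history must not be empty")
    ordered = sorted(hourly_gpu_demand)
    index = min(len(ordered) - 1, int(baseline_quantile * len(ordered)))
    baseline = ordered[index]
    burst_gpu_hours = sum(max(0, d - baseline) for d in hourly_gpu_demand)
    return baseline, burst_gpu_hours


if __name__ == "__main__":
    # Placeholder history: GPUs in use during eight sampled hours.
    demand = [4, 4, 4, 4, 6, 8, 16, 4]
    baseline, burst = split_baseline_and_burst(demand)
    print(f"baseline GPUs: {baseline}, burst GPU-hours: {burst}")
    print(f"500 TB over 10 Gbps: {transfer_days(500, 10):.1f} days")
```

For the placeholder history, the sketch reports a baseline of 4 GPUs with 18 burst GPU-hours on top. The baseline is a candidate for dedicated capacity, while the burst portion is what you would size on-demand or overflow capacity against. A higher `baseline_quantile` trades more idle dedicated hardware for fewer burst hours.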

Conclusion & Your Next Steps

The era of defaulting to public hyperscalers for every IT initiative is ending, particularly when it comes to the intense, unpredictable demands of artificial intelligence. While public GPU rentals offer a temporary playground, serious enterprise AI requires the governance, cost-predictability, and architectural precision of managed private cloud infrastructure. By building on carrier-neutral platforms like Equinix, organizations can future-proof their AI investments, ensuring they have the raw power to innovate without sacrificing control.

Are you ready to architect an AI environment that actually fits your enterprise needs? Fill out the form below for a free AI infrastructure consultation with an Accrets Cloud Expert:

Request an AI infrastructure assessment today.

Dandy Pradana is a digital marketer and tech enthusiast focused on driving digital growth through smart infrastructure and automation. Aligned with Accrets’ mission, he bridges marketing strategy and cloud technology to help businesses scale securely and efficiently.